The Integrity of Metric Labs

Raw data is not intelligence. At Canton Metric Labs, we apply a rigorous mathematical filter to every dataset before it enters our analytics environment. Explore the specific verification protocols that protect the accuracy of your business insights.

Phase One: Structural Scrubbing

Validation begins with the geometry of the data. We identify outliers, missing coefficients, and structural anomalies that skew traditional market analytics.

Schema Synchronization

Before any testing occurs, datasets are mapped against our proprietary schema. This ensures that time-stamped data points from disparate sources, such as consumer behavior metrics and logistics costs, align on a standardized timeline. Without this synchronization, analytics often produce "ghost correlations": apparent relationships that are artifacts of misaligned timestamps and that lead to expensive strategic errors.
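As a rough illustration of timeline alignment, the sketch below converts timestamps from two sources to UTC, averages them into calendar-day buckets, and keeps only the days both sources cover. The daily granularity, the function names, and the sample figures are assumptions made for this example; they do not describe the proprietary schema itself.

```python
from collections import defaultdict
from datetime import datetime, timezone


def to_daily_buckets(records):
    """Average (iso_timestamp, value) pairs into UTC calendar-day buckets.

    Timestamps are assumed to carry an explicit UTC offset.
    """
    buckets = defaultdict(list)
    for stamp, value in records:
        moment = datetime.fromisoformat(stamp).astimezone(timezone.utc)
        buckets[moment.date()].append(value)
    return {day: sum(vals) / len(vals) for day, vals in buckets.items()}


def align(left, right):
    """Keep only the days present in both sources so rows are comparable."""
    a, b = to_daily_buckets(left), to_daily_buckets(right)
    return {day: (a[day], b[day]) for day in sorted(a.keys() & b.keys())}


# Hypothetical sample data: one source reports in Sydney time, one in UTC.
consumer = [
    ("2026-03-01T09:00:00+10:00", 120.0),  # falls on 28 Feb in UTC
    ("2026-03-01T15:00:00+10:00", 140.0),
    ("2026-03-02T09:00:00+10:00", 90.0),   # falls on 1 Mar in UTC
]
logistics = [
    ("2026-03-01T02:00:00+00:00", 50.0),
    ("2026-03-03T02:00:00+00:00", 60.0),
]
aligned = align(consumer, logistics)
```

Note how the first consumer reading lands on a different UTC day than its local date suggests; comparing the two sources by local date would pair the wrong rows.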

Verification Thresholds

Logical Consistency

We cross-examine data against established market physics. If a dataset suggests a 400% growth in a saturated Sydney sector within 24 hours, our systems flag this for manual forensic review.

- Internal cross-referencing

- Historical trend bounding

- Anomaly detection triggers
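Historical trend bounding can be pictured as a simple growth-rate ceiling. The sketch below flags any day whose day-over-day growth exceeds a bound; the 50% default and the sample revenue series are illustrative assumptions, not our production thresholds.

```python
def flag_implausible_growth(series, max_daily_growth=0.5):
    """Return indices where day-over-day growth exceeds the bound.

    max_daily_growth is a fraction (0.5 means +50%); the default is an
    assumed value chosen for this example.
    """
    flagged = []
    for i in range(1, len(series)):
        previous, current = series[i - 1], series[i]
        if previous <= 0:
            continue  # growth rate is undefined; leave to other checks
        growth = (current - previous) / previous
        if growth > max_daily_growth:
            flagged.append(i)
    return flagged


# Hypothetical daily revenue: day 3 jumps from 101 to 505, a 400% increase.
daily_revenue = [100.0, 104.0, 101.0, 505.0, 110.0]
flagged_days = flag_implausible_growth(daily_revenue)
```

Here only index 3 is flagged, which is the kind of reading that would be routed to manual review.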

Statistical Weighting

Precision requires proper balance. We adjust for sample bias and volatility indices to ensure that a single noisy variable doesn't dominate the final output of the analytics engine.

- Bias-correction algorithms

- Confidence interval mapping

- Variance compression
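One common way to keep a noisy variable from dominating is inverse-variance weighting, where each signal's weight is proportional to one over its variance. The sketch below shows that technique as an example only; it is an assumption that it resembles the weighting described above, and the signal names and values are made up.

```python
from statistics import pvariance


def inverse_variance_weights(signals, floor=1e-12):
    """Map each signal name to a weight proportional to 1 / variance.

    floor guards against division by zero for constant signals.
    Weights are normalized to sum to 1.
    """
    precision = {
        name: 1.0 / max(pvariance(values), floor)
        for name, values in signals.items()
    }
    total = sum(precision.values())
    return {name: p / total for name, p in precision.items()}


# Hypothetical signals: one steady, one highly volatile.
signals = {
    "stable": [10.0, 11.0, 10.0, 11.0],
    "noisy": [0.0, 20.0, 0.0, 20.0],
}
weights = inverse_variance_weights(signals)
```

With these inputs the volatile signal receives well under 1% of the total weight, so it cannot swamp the steadier one.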

Integrity Auditing

Every metric lab output is timestamped and cryptographically hashed. This creates a tamper-evident audit trail from the raw ingestion point to the final strategic recommendation: any later change to a record no longer matches its stored hash.

- Immutable lab logs

- Hash-sum verification

- Chain of custody tracking
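A hash-chained log is one standard way to build this kind of audit trail: each entry stores the hash of the previous entry, so editing any record breaks every hash after it. The sketch below uses SHA-256 from Python's standard library; the entry layout and field names are assumptions for illustration, not our actual log format.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """Hash a JSON-serializable dict with stable key ordering."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log, payload, timestamp):
    """Append an entry linked to the hash of the entry before it."""
    previous_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {
        "timestamp": timestamp,
        "payload": payload,
        "previous_hash": previous_hash,
    }
    log.append({**body, "hash": _digest(body)})


def verify_chain(log):
    """Return True only if every hash and every link is intact."""
    previous_hash = GENESIS_HASH
    for entry in log:
        body = {k: entry[k] for k in ("timestamp", "payload", "previous_hash")}
        if entry["previous_hash"] != previous_hash or entry["hash"] != _digest(body):
            return False
        previous_hash = entry["hash"]
    return True


# Hypothetical two-step trail from ingestion to recommendation.
log = []
append_entry(log, {"stage": "ingestion", "rows": 1200}, "2026-03-01T00:00:00+00:00")
append_entry(log, {"stage": "recommendation", "score": 0.93}, "2026-03-01T01:00:00+00:00")

tampered = [dict(entry) for entry in log]
tampered[0]["payload"] = {"stage": "ingestion", "rows": 9999}
```

Calling `verify_chain(log)` returns `True`, while `verify_chain(tampered)` returns `False` because the altered payload no longer matches its recorded hash.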

"In the realm of market analytics, speed is a liability if the foundation is fractured."

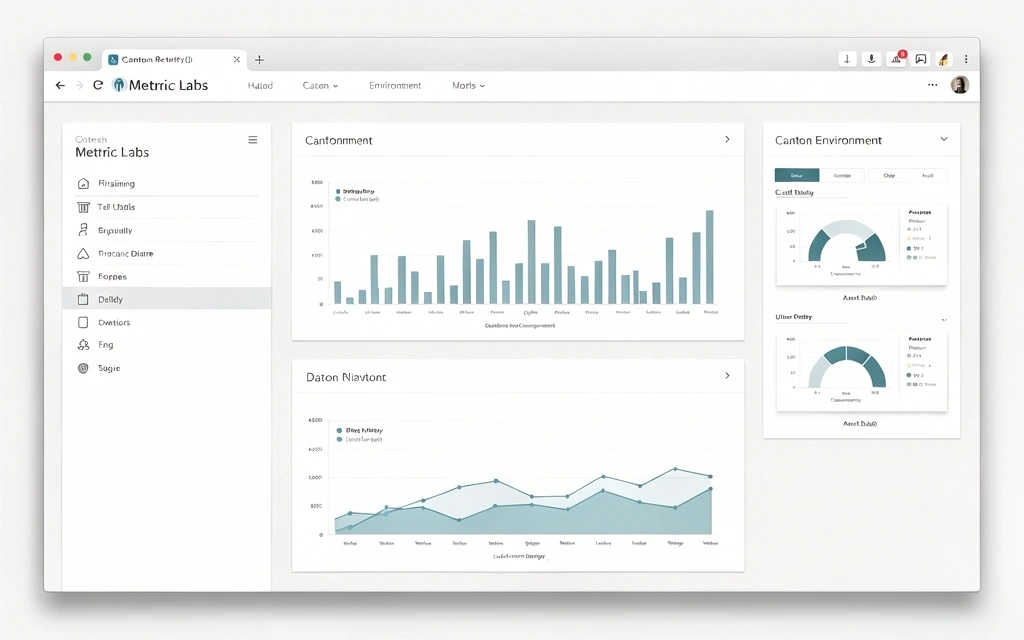

Our validation process is not a bottleneck; it is a filter for quality. Unlike off-the-shelf software that blindly processes whatever it is fed, Canton Metric Labs acts as a guardian of your decision-making data. By the time a metric reaches our visualization layer, it has survived three distinct layers of mathematical scrutiny.

This institutional approach is why local Australian businesses trust us with high-stakes financial data. We don't just report numbers—we verify their legitimacy.

Calibration Accuracy

Ingestion Filter

Every API connection or batch upload is checked for metadata parity. We ensure that the source of the data matches the expected origin profile, preventing data pollution from unverified third-party scrapers or corrupted exports.
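In its simplest form, a metadata parity check compares the metadata that arrives with an upload against the profile expected for that source. The sketch below assumes a flat dictionary of fields; the field names and values are hypothetical and stand in for whatever a real origin profile contains.

```python
def parity_mismatches(metadata, expected_profile):
    """Return the profile fields whose values are missing or differ."""
    return sorted(
        field
        for field, expected in expected_profile.items()
        if metadata.get(field) != expected
    )


# Hypothetical origin profile and incoming upload metadata.
expected_profile = {
    "source_id": "pos-export",
    "schema_version": "2",
    "content_type": "text/csv",
}
upload_metadata = {
    "source_id": "pos-export",
    "schema_version": "1",  # out-of-date export
    "content_type": "text/csv",
    "rows": 1200,  # extra fields are ignored by this check
}
mismatches = parity_mismatches(upload_metadata, expected_profile)
```

An empty result means the upload matches the profile; here the stale `schema_version` is the only mismatch reported.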

Internal Consistency Cross-Check

Large datasets are subdivided and checked for internal correlation. Any subset that deviates by more than 1.5 standard deviations from the expected laboratory benchmark triggers an automated re-scan and a manual review by a lab technician.
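The 1.5 standard deviation rule can be sketched as follows, under one possible reading: the dataset is split into fixed-size chunks, and a chunk is flagged when its mean sits more than 1.5 benchmark standard deviations away from the benchmark mean. The chunking scheme, the benchmark values, and the sample readings are all assumptions made for this example.

```python
from statistics import mean


def flag_chunks(values, chunk_size, benchmark_mean, benchmark_std, threshold=1.5):
    """Return indices of chunks whose mean deviates beyond the threshold.

    Deviation is measured in units of benchmark_std, which must be positive.
    """
    flagged = []
    for start in range(0, len(values), chunk_size):
        chunk = values[start:start + chunk_size]
        deviation = abs(mean(chunk) - benchmark_mean) / benchmark_std
        if deviation > threshold:
            flagged.append(start // chunk_size)
    return flagged


# Hypothetical readings: the middle chunk drifts well above the benchmark.
readings = [10.0, 11.0, 9.0, 10.0, 18.0, 19.0, 17.0, 18.0, 10.0, 9.0, 11.0, 10.0]
suspect_chunks = flag_chunks(
    readings, chunk_size=4, benchmark_mean=10.0, benchmark_std=2.0
)
```

Only the second chunk (index 1) is flagged: its mean of 18 is four benchmark standard deviations from the expected value, so it would be re-scanned and reviewed.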

Final Validation Export

The resulting report includes a "Reliability Score" for the specific dataset. This allows stakeholders to understand the statistical confidence behind the suggested market moves.

Ready for cleaner intelligence?

Stop making high-stakes decisions based on unverified market signals. Let Canton Metric Labs apply our validation standards to your next initiative.

Canton Metric Labs © 2026

Verified Analytics Environment v4.12.0